Expanding the .Net Core API by Violating the Geneva Convention

The .Net Core 1.1 API, and by extension the .Net Standard 1.x APIs, grew out of what I feel was a very noble and optimistic goal: streamlining the API surface. However, due to some combination of language inertia, enterprise customers, and a lack of appetite for library porting, the end state of the API has some strange rough edges. The reflection API, specifically, went in some gyrating directions before settling into something very close to what already exists in the full framework. Along the way, it lost some of the elegance of the proposed (and implemented) split between Type and TypeInfo.

In the late-game rush to get as usable an API surface as possible, it was inevitable that some APIs would be missed in the chaos. On this occasion, it was the API for Encoder and Decoder that sent me down a fairly large rabbit hole. More specifically, the culprit was the pointer-based (yes, pointer) overload of Convert().

Yet Another TCP Server

In the past couple of weeks, I've been roughing out a gRPC-esque microservice library to take advantage of the lessons that we, as the .Net developer community, have learned over the course of watching and participating in the building of ASP.Net Core. The HTTP server Kestrel (shout out to Ben Adams, David Fowler, and others) really got into the nitty-gritty of what it takes to truly hone performance in a C# setting. Not surprisingly, the results look not-too-dissimilar from the techniques you would use when coding high-performance servers in the classic languages.

In other words, avoid allocations at all costs, pool your memory, and don't copy your buffers all over the place. I'm glossing over some other pretty serious tricks, but that's the gist. The unsafe keyword can be your friend, if you know what you're doing and treat it nicely.

In the spirit of Kestrel and projects like Netty, I've been framing up an ultralight, event-loop-based TCP server on top of the truly awful SocketAsyncEventArgs-based Socket API. It isn't quite as portable as something like a libuv wrapper. Nevertheless, this API is a pretty raw wrapper around IO Completion Ports on Windows and epoll on Linux. I'm running up against framework allocations now, but I'm still managing about 115k requests per second.

The Kestrel team has since added System.Net.Sockets as a transport for their server. It looks like the plan is to drive perf improvements for Core 2.0 with that change and leave libuv behind. I'm starting to look at it myself, although there is a lot to be aware of when hacking on corefx, and the build setup is still a bit quirky.

UTF-8 Strings, You Performance Nightmare

One of the early issues you run into when designing a wire format is which character encoding to use. In the CLR, strings are represented internally as UTF-16. However, the interwebs protocol designers at large have decided that UTF-8 is the way to go, for a number of reasons, some historical, some not. For ASCII-heavy text, such as the keywords and headers of most text protocols, UTF-8 also takes less space: one byte per character instead of two. That is a distinct advantage when talking about data transfer.
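To put numbers on that, you can ask each encoding how many bytes a string needs. The sketch below is a small illustration; the EncodingSizes class is a name made up for it. Encoding.Unicode is the framework's UTF-16 (little-endian) encoding, and GetByteCount() does not include a byte-order mark.

```csharp
using System.Text;

public static class EncodingSizes
{
    // Bytes needed for `text` in UTF-8: 1 per ASCII char, 2 to 4 otherwise.
    public static int Utf8Size(string text) => Encoding.UTF8.GetByteCount(text);

    // Bytes needed for `text` in UTF-16, the CLR's in-memory representation:
    // 2 per char (a surrogate pair is two chars, so 4 bytes).
    public static int Utf16Size(string text) => Encoding.Unicode.GetByteCount(text);
}
```

`"GET /index.html"` needs 15 bytes in UTF-8 and 30 in UTF-16. The advantage flips for text such as the three CJK characters `"\u65E5\u672C\u8A9E"`, which need 9 bytes in UTF-8 but only 6 in UTF-16.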

The problem is that, when living at the edge of performance, converting strings to UTF-8 can easily become a bottleneck. This is most apparent when writing an HTTP server, where almost all the data is character-based, but it can be a problem in any protocol. The framework, thankfully, provides a very highly optimized set of classes to deal with this encoding.

The usual example given in these cases goes something like this:

```csharp
using System.Net.Sockets;
using System.Text;
using System.Threading.Tasks;

public class Writer
{
    private readonly NetworkStream _networkStream;

    public Writer(NetworkStream networkStream)
    {
        _networkStream = networkStream;
    }

    public async Task WriteStringAsync(string item)
    {
        // Allocates a brand-new byte[] on every call.
        var bytes = Encoding.UTF8.GetBytes(item);
        await _networkStream.WriteAsync(bytes, 0, bytes.Length);
    }
}
```

Now, as you can see already, this is not an especially fast way to go. You're allocating a byte array for every string you pass to this method. And, under the hood, the stream may be doing some allocations and management of its own. If you're in the high-performance domain, you'll probably want to have (and likely already have) your own buffer on hand that you'll be writing to.

Keeping Those Buffers Alive

What would it look like if we wrapped a buffer that we can recycle?

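```csharp
using System.Text;

public class WriteableBuffer
{
    // Public so the filled buffer can be handed to Socket.Send() later.
    public byte[] Buffer { get; }

    private int _currentPosition;

    public WriteableBuffer(int bufferSize)
    {
        Buffer = new byte[bufferSize];
    }

    public void WriteString(string item)
    {
        // Encodes straight into our buffer; GetBytes() returns the number of bytes written.
        _currentPosition += Encoding.UTF8.GetBytes(item, 0, item.Length, Buffer, _currentPosition);
    }

    public void Reset()
    {
        _currentPosition = 0;
    }
}
```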

This is better. We've written directly to a buffer we have on hand, which we can pass to Socket.Send() later on at our discretion. Some allocation happens inside GetBytes(), but it's somewhat unavoidable. However, we have another problem, which astute readers may have already spotted: what if the string is too long to fit in the buffer we happen to have on hand? GetBytes() simply throws an ArgumentException, and the UTF8Encoding class offers no help beyond that. None of its overloads tell you how many characters were consumed, only how many bytes were written. The Encoder class, however, can.

```csharp
using System.Text;

public class WriteableBuffer
{
    public byte[] Buffer { get; }

    private int _currentPosition;

    private Encoder _encoder = Encoding.UTF8.GetEncoder();

    public WriteableBuffer(int bufferSize)
    {
        Buffer = new byte[bufferSize];
    }

    // Returns how many characters of item (starting at offset) made it into the buffer.
    public int WriteString(string item, int offset, bool reset)
    {
        _encoder.Convert(item, offset, item.Length - offset, Buffer, _currentPosition, int.MaxValue, reset,
            out var charsUsed, out var bytesWritten, out var completed);

        _currentPosition += bytesWritten;

        return charsUsed;
    }

    public void Reset()
    {
        _currentPosition = 0;
    }
}
```

Now we're getting somewhere. We have a WriteString() method that tells us how many characters of the string we passed in actually made it into the buffer. If that number is less than the total number of characters, we can write out the buffer to our Socket, reset the WriteableBuffer, and continue writing the string where we left off.

Unfortunately, I lied a bit. An overload of Convert() that takes a string directly actually doesn't exist at all. You actually need to do something different.

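```csharp
public int WriteString(string item, int offset, bool reset)
{
    // Copies the whole string into a brand-new char[] on every call.
    var chars = item.ToCharArray();

    // The array overload validates byteCount against the array, so pass only the space that is left.
    _encoder.Convert(chars, offset, chars.Length - offset, Buffer, _currentPosition, Buffer.Length - _currentPosition, reset,
        out var charsUsed, out var bytesWritten, out var completed);

    _currentPosition += bytesWritten;

    return charsUsed;
}
```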
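The resume-where-you-left-off loop described earlier can be tried with this char[] overload, which does exist. The following is a sketch, not the author's server code. WriteInChunks is an illustrative name, and the Stream is a hypothetical stand-in for the socket. The scratch buffer must hold at least 4 bytes, because Convert() throws an ArgumentException if it can't fit even one character.

```csharp
using System.IO;
using System.Text;

public static class ChunkedEncoding
{
    // Encodes `text` through a deliberately small scratch buffer, draining it
    // into `sink` (standing in for the socket) each time it fills up.
    public static void WriteInChunks(string text, byte[] buffer, Stream sink)
    {
        Encoder encoder = Encoding.UTF8.GetEncoder();
        char[] chars = text.ToCharArray(); // the per-call allocation discussed above
        int offset = 0;
        bool completed = false;

        while (!completed)
        {
            // byteCount never exceeds what the array has left after byteIndex,
            // otherwise the array overload throws ArgumentOutOfRangeException.
            encoder.Convert(chars, offset, chars.Length - offset,
                buffer, 0, buffer.Length, /* flush: */ true,
                out int charsUsed, out int bytesUsed, out completed);

            sink.Write(buffer, 0, bytesUsed); // "send" whatever fit
            offset += charsUsed;              // resume where the encoder stopped
        }
    }
}
```

With an 8-byte buffer, a long string comes out in several chunks whose concatenation matches Encoding.UTF8.GetBytes(text). By contrast, asking Encoding.UTF8.GetBytes() to write the same string into that 8-byte array throws an ArgumentException.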

Well, in the words of The Dude, this is like, a bummer, man. We just spent all this time trying to figure out how to avoid allocating byte buffers all over the place, but now we've replaced it with a call that allocates a character array. So, the performance we gained has now been erased in a trail of tears and sadness. Except, perhaps not. We have a different overload at our disposal.

```csharp
public int WriteString(string item, int offset, bool reset)
{
    unsafe
    {
        // Pin the string and our buffer so the GC can't move them while we hold pointers.
        fixed (char* charPtr = item)
        {
            fixed (byte* bytePtr = &Buffer[_currentPosition])
            {
                // The pointer overload has no offset parameter: advance the pointer instead.
                _encoder.Convert(charPtr + offset, item.Length - offset, bytePtr, int.MaxValue, reset,
                    out var charsUsed, out var bytesWritten, out var completed);

                _currentPosition += bytesWritten;

                return charsUsed;
            }
        }
    }
}
```

This overload of Convert() happily accepts pointers instead of arrays, which is immensely helpful here. We don't have to allocate anything to give the Encoder access to the string. It reads directly from the memory where the string lives and writes directly into our buffer, and both have been pinned with the fixed keyword. However, this code has a bug, and it's an enormous one.

Unsafe Code is Unsafe

The Convert() methods require you to specify the maximum number of bytes the encoder may write. The array-based overloads validate that count against the array you hand them. As the EncoderNLS source further down shows, a count that runs past the end of the array throws an ArgumentOutOfRangeException, so a mistake there fails loudly. Now, however, we're in unsafe territory, writing to a raw memory location, and all bets are off. By passing int.MaxValue, this code allows a string of effectively any length to be written starting at our position, potentially corrupting memory beyond the end of our buffer. This is a classic buffer overrun vulnerability, and I've included it specifically to illustrate how careful you need to be when writing unsafe code.

The fix in this case, though, is pretty straightforward:

```csharp
_encoder.Convert(charPtr + offset, item.Length - offset, bytePtr, Buffer.Length - _currentPosition, reset,
    out var charsUsed, out var bytesWritten, out var completed);
```

We just need to let Convert() know how many bytes it is allowed to write, and we're good to go. So, our problem appears to be solved. The fixed keyword adds a non-zero amount of overhead, but it is very tiny. This is as close to the metal as you can get here, and there really aren't any more performance improvements to be had.
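The effect of the cap is easy to observe on a runtime where Encoder itself exposes the pointer overload, such as the full framework or later .Net Core releases. The following is a sketch. BoundedPointerConvert is a hypothetical helper, the scratch space is stack memory, and the project needs unsafe code enabled (AllowUnsafeBlocks).

```csharp
using System.Text;

public static unsafe class BoundedPointerConvert
{
    // Encodes as much of `text` as fits into `capacity` bytes of stack memory.
    public static (int charsUsed, int bytesUsed, bool completed) Encode(string text, int capacity)
    {
        Encoder encoder = Encoding.UTF8.GetEncoder();
        byte* scratch = stackalloc byte[capacity];

        fixed (char* chars = text)
        {
            // byteCount is the real capacity, never int.MaxValue: with raw
            // pointers nothing else stops the encoder from running past the end.
            encoder.Convert(chars, text.Length, scratch, capacity, true,
                out int charsUsed, out int bytesUsed, out bool completed);
            return (charsUsed, bytesUsed, completed);
        }
    }
}
```

Encoding "hello world" with a cap of 6 bytes stops after six characters and reports completed as false, instead of writing past the end of the scratch space.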

Except, I have bad news again. This overload does not exist in .Net Core, only in the full framework.

Whatcha Talkin' About, Willis?

Yes, that's correct. The overload that takes pointers does not exist on Encoder in .Net Core 1.x at all. I'm not really sure how it got missed. It will be incorporated in .Net Core 2.0, but for 1.x and the accompanying .Net Standard APIs, we don't get it. What can we really do here?

- Create our own UTF8 encoder

- Copy out the current encoder into our project and expand the API

- Abuse reflection in a way that makes you kinda queasy

I opted for the third option. Creating my own UTF8 encoder is extremely non-trivial. It would also have nowhere near the testing surface of the provided class, which has effectively been tested by every framework user who has ever used it. I did briefly consider the second option, but System.Text.Encoding is a never-ending sea of interconnected internal classes, and separating out what I actually needed turned out not to be particularly straightforward either.

You see, the Encoder class is actually an abstract class. When we call Encoding.UTF8.GetEncoder(), what we actually get is an instance of the internal class EncoderNLS (or an internal subclass of it), which in turn wraps the UTF8 encoding logic itself. However, since that class is internal, and GetEncoder() returns it to us typed as the base class Encoder, we have limited options.
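You can check this from code. In the following sketch, EncoderTypeInspection is an illustrative name. It relies only on the concrete type being non-public, since that type's exact name may differ between runtimes.

```csharp
using System;
using System.Text;

public static class EncoderTypeInspection
{
    // The concrete type hiding behind the abstract Encoder we get back.
    public static Type Utf8EncoderType() => Encoding.UTF8.GetEncoder().GetType();

    // IsVisible is false when code outside the framework can't name the type.
    public static bool IsHiddenFromUserCode(Type type) => !type.IsVisible;
}
```

Encoder itself reports IsAbstract as true, the runtime type is assignable to Encoder, and IsHiddenFromUserCode() returns true for it.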

To the Source Code, Watson

Of course, because we can actually see the source code of the various .Net frameworks these days, we can trace a route into the EncoderNLS class via reflection. Here's the source of the char[] overload of EncoderNLS.Convert():

```csharp
// This method is used when your output buffer might not be large enough for the entire result.
// Just call the pointer version. (This gets bytes)
[System.Security.SecuritySafeCritical] // auto-generated
public override unsafe void Convert(char[] chars, int charIndex, int charCount,
                                    byte[] bytes, int byteIndex, int byteCount, bool flush,
                                    out int charsUsed, out int bytesUsed, out bool completed)
{
    // Validate parameters
    if (chars == null || bytes == null)
        throw new ArgumentNullException((chars == null ? nameof(chars): nameof(bytes)), SR.ArgumentNull_Array);

    if (charIndex < 0 || charCount < 0)
        throw new ArgumentOutOfRangeException((charIndex < 0 ? nameof(charIndex): nameof(charCount)), SR.ArgumentOutOfRange_NeedNonNegNum);

    if (byteIndex < 0 || byteCount < 0)
        throw new ArgumentOutOfRangeException((byteIndex < 0 ? nameof(byteIndex): nameof(byteCount)), SR.ArgumentOutOfRange_NeedNonNegNum);

    if (chars.Length - charIndex < charCount)
        throw new ArgumentOutOfRangeException(nameof(chars), SR.ArgumentOutOfRange_IndexCountBuffer);

    if (bytes.Length - byteIndex < byteCount)
        throw new ArgumentOutOfRangeException(nameof(bytes), SR.ArgumentOutOfRange_IndexCountBuffer);

    Contract.EndContractBlock();

    // Avoid empty input problem
    if (chars.Length == 0)
        chars = new char[1];
    if (bytes.Length == 0)
        bytes = new byte[1];

    // Just call the pointer version (can't do this for non-msft encoders)
    fixed (char* pChars = &chars[0])
    {
        fixed (byte* pBytes = &bytes[0])
        {
            Convert(pChars + charIndex, charCount, pBytes + byteIndex, byteCount, flush,
                out charsUsed, out bytesUsed, out completed);
        }
    }
}
```

You'll notice that the array-based version really just does some contract verification and bounds checking, then passes on to the pointer-based overload, which does the actual work:

```csharp
// This is the version that uses pointers. We call the base encoding worker function
// after setting our appropriate internal variables. This is getting bytes
[System.Security.SecurityCritical] // auto-generated
public unsafe void Convert(char* chars, int charCount,
                           byte* bytes, int byteCount, bool flush,
                           out int charsUsed, out int bytesUsed, out bool completed)
{
    // Validate input parameters
    if (bytes == null || chars == null)
        throw new ArgumentNullException(bytes == null ? nameof(bytes): nameof(chars), SR.ArgumentNull_Array);
    if (charCount < 0 || byteCount < 0)
        throw new ArgumentOutOfRangeException((charCount < 0 ? nameof(charCount): nameof(byteCount)), SR.ArgumentOutOfRange_NeedNonNegNum);
    Contract.EndContractBlock();

    // We don't want to throw
    m_mustFlush = flush;
    m_throwOnOverflow = false;
    m_charsUsed = 0;

    // Do conversion
    bytesUsed = m_encoding.GetBytes(chars, charCount, bytes, byteCount, this);
    charsUsed = m_charsUsed;

    // Its completed if they've used what they wanted AND if they didn't want flush or if we are flushed
    completed = (charsUsed == charCount) && (!flush || !HasState) &&
        (m_fallbackBuffer == null || m_fallbackBuffer.Remaining == 0);

    // Our data thingies are now full, we can return
}
```

The only reason we can't get to this version of the Convert() method is this: the base class, Encoder, doesn't declare the signature in .Net Core 1.x, and EncoderNLS, the class that does, is internal, so we can't name it in our code. However, as we can see, the code clearly exists and is already being exercised by the array overload. So, we're going to use some not-usually-recommended tricks to gain access to it.

Use Stupid Techniques, Win Stupid Prizes?

Let's first spike out what we need to "pretend" that this overload already exists on the Encoder class. That's pretty easy to do with an extension method.

```csharp
public static class EncoderExtensions
{
    // Will eventually point at the framework's pointer-based Convert(), obtained via reflection.
    private static Action<Encoder, char*, int, byte*, int, bool, int, int, bool> ConvertInternal;

    public static unsafe void Convert(this Encoder encoder, char* chars, int charCount,
        byte* bytes, int byteCount, bool flush,
        out int charsUsed, out int bytesUsed, out bool completed)
    {
        // Forward to the delegate; our own out parameters are passed straight through.
        ConvertInternal(encoder, chars, charCount, bytes, byteCount, flush,
            out charsUsed, out bytesUsed, out completed);
    }
}
```

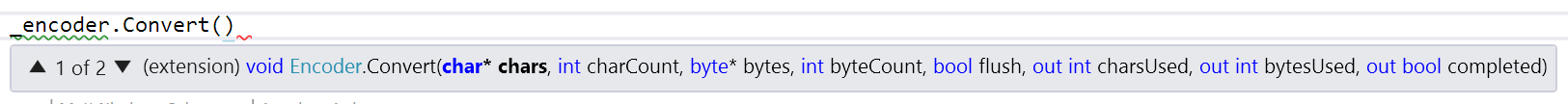

We've done a couple of things here. We've made a private static generic delegate that we'll later build using reflection to get at the internal pointer based method overload in EncoderNLS. Additionally, we've set up an extension method on the Encoder class to "extend" the API to add the new signature. Now, we have something that looks normal from an API perspective, and would come up very nicely with code completion:

But, we have a problem. Pointer types are not allowed as type parameters and out parameters cannot be specified on a generic delegate type like Action. Action<byte*> is not allowed and neither is Action<out int>. So we need to get a little old school and specify an actual delegate type.

```csharp
public static unsafe class EncoderExtensions
{
    // A named delegate type can declare pointer and out parameters, unlike Action<...>.
    private delegate void ConvertDelegate(Encoder encoder, char* chars, int charCount, byte* bytes,
        int byteCount, bool flush, out int charsUsed, out int bytesUsed, out bool completed);

    private static ConvertDelegate ConvertInternal;

    public static void Convert(this Encoder encoder, char* chars, int charCount,
        byte* bytes, int byteCount, bool flush,
        out int charsUsed, out int bytesUsed, out bool completed)
    {
        ConvertInternal(encoder, chars, charCount, bytes, byteCount, flush,
            out charsUsed, out bytesUsed, out completed);
    }
}
```

Actually Creating the Delegate

If you've ever worked with the reflection API and delegate creation before, you would probably do this as a first pass.

```csharp
private static ConvertDelegate CreateDelegate()
{
    // Parameter types of Convert(char*, int, byte*, int, bool, out int, out int, out bool).
    var parameterSignature = new[]
    {
        typeof(char*),
        typeof(int),
        typeof(byte*),
        typeof(int),
        typeof(bool),
        typeof(int).MakeByRefType(),
        typeof(int).MakeByRefType(),
        typeof(bool).MakeByRefType()
    };

    var method = Encoding.UTF8.GetEncoder().GetType().GetRuntimeMethod("Convert", parameterSignature);

    return (ConvertDelegate)method.CreateDelegate(typeof(ConvertDelegate));
}
```

There are a few interesting tidbits in here. First is the MakeByRefType() call on the last three parameters. It's required because we need to tell the framework that those slots are by-reference parameters, not plain int or bool values. The last three are out parameters, so they're passed by reference.

The second tidbit is that, while this compiles just fine, it's going to blow up at runtime. The ConvertDelegate delegate type specifies Encoder as its first parameter. However, the MethodInfo we just pulled comes from EncoderNLS, and an Encoder can't stand in for the EncoderNLS instance that method needs, so CreateDelegate() throws an ArgumentException. We can't fix that by naming EncoderNLS in the delegate either, because it is internal: from our perspective, that type doesn't exist.

```csharp
private delegate void ConvertDelegate(EncoderNLS encoder, char* chars, int charCount, byte* bytes,
    int byteCount, bool flush, out int charsUsed, out int bytesUsed, out bool completed);
```

If we were in a perfect world where the internal keyword didn't exist, we could do the above. However, we aren't, and we can't. So we need to bring in heavier guns.
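The lookup half of that first pass works fine on its own; only the delegate binding fails. The following sketch isolates the lookup (ConvertLookup is an illustrative name). It uses typeof(char).MakePointerType(), which yields the same Type as typeof(char*) without requiring an unsafe context. It then checks that the three MakeByRefType() slots line up with out parameters. On later runtimes, where Encoder itself declares this overload, the same lookup still succeeds.

```csharp
using System;
using System.Reflection;
using System.Text;

public static class ConvertLookup
{
    public static MethodInfo FindPointerConvert()
    {
        Type[] signature =
        {
            typeof(char).MakePointerType(),  // char* chars
            typeof(int),                     // int charCount
            typeof(byte).MakePointerType(),  // byte* bytes
            typeof(int),                     // int byteCount
            typeof(bool),                    // bool flush
            typeof(int).MakeByRefType(),     // out int charsUsed
            typeof(int).MakeByRefType(),     // out int bytesUsed
            typeof(bool).MakeByRefType()     // out bool completed
        };

        // Searched on the runtime (internal) type, exactly as in the first pass.
        Type encoderType = Encoding.UTF8.GetEncoder().GetType();
        return encoderType.GetRuntimeMethod("Convert", signature);
    }
}
```

The returned MethodInfo has eight parameters, and the last three report IsOut as true with a ParameterType of Int32& or Boolean&.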

So Very Expressive

What we really need is some way of casting Encoder to EncoderNLS as a kind of runtime cast. A runtime cast, you say? Well, that's simply not possible! As with most things in C#, though, impossible things become possible when you start working close to the IL level.

```csharp
// this
var encoder = Expression.Parameter(typeof(Encoder), "encoder");
var castEncoder = Expression.Convert(encoder, Type.GetType("System.Text.EncoderNLS"));

// is effectively this
encoder => (EncoderNLS)encoder
```

In this code, we've used .Net expression trees (System.Linq.Expressions) to build an expression that casts our encoder instance to the EncoderNLS type. As a side note, the strings passed to Expression.Parameter() have no particular meaning in the generated code. They're just there to give you visibility into the names of your parameters and variables. Obviously, this is not a complete method. You couldn't return the cast encoder to the caller either, because the caller still doesn't know about the EncoderNLS type at compile time. But while we're still inside the expression-generated code, we can do whatever we want with this cast instance, including calling methods on it.

```csharp
private static ConvertDelegate CreateDelegate()
{
    var parameterSignature = new[]
    {
        typeof(char*),
        typeof(int),
        typeof(byte*),
        typeof(int),
        typeof(bool),
        typeof(int).MakeByRefType(),
        typeof(int).MakeByRefType(),
        typeof(bool).MakeByRefType()
    };

    var method = Encoding.UTF8.GetEncoder().GetType().GetRuntimeMethod("Convert", parameterSignature);

    // Parameters of the generated method; they mirror ConvertDelegate.
    var encoder = Expression.Parameter(typeof(Encoder), "encoder");
    var chars = Expression.Parameter(typeof(char*), "chars");
    var charCount = Expression.Parameter(typeof(int), "charCount");
    var bytes = Expression.Parameter(typeof(byte*), "bytes");
    var byteCount = Expression.Parameter(typeof(int), "byteCount");
    var flush = Expression.Parameter(typeof(bool), "flush");
    var charsUsed = Expression.Parameter(typeof(int).MakeByRefType(), "charsUsed");
    var bytesUsed = Expression.Parameter(typeof(int).MakeByRefType(), "bytesUsed");
    var completed = Expression.Parameter(typeof(bool).MakeByRefType(), "completed");

    // Everything except the encoder itself is forwarded to Convert().
    var methodCallParams = new[]
    {
        chars, charCount, bytes, byteCount, flush, charsUsed, bytesUsed, completed
    };

    // ((EncoderNLS)encoder).Convert(chars, charCount, ..., out completed)
    var castEncoder = Expression.Convert(encoder, Type.GetType("System.Text.EncoderNLS"));
    var methodCall = Expression.Call(castEncoder, method, methodCallParams);

    return Expression.Lambda<ConvertDelegate>(methodCall, new[] { encoder }.Concat(methodCallParams)).Compile();
}
```

There's a lot going on there. Let's break it down step by step. The first part, which you've already seen, just grabs the MethodInfo from the EncoderNLS class for the overload that we want to be calling. The next batch of lines calling Expression.Parameter() are setting up the input parameters to the method that we're going to be generating. In this case, we want them to match the ConvertDelegate delegate signature, because we're trying to generate a method with that signature.

We then collect every parameter except the encoder into an array to pass into the Convert() call on the EncoderNLS instance. Next, we make an expression that casts the instance to EncoderNLS, and finally one that calls the Convert() method with those parameters.

The last line wraps that call in a lambda expression and compiles it to IL. So, what we've built here is a theoretical lambda expression that looks like this:

```csharp
(Encoder encoder, char* chars, int charCount, byte* bytes,
 int byteCount, bool flush, out int charsUsed, out int bytesUsed,
 out bool completed) =>
    ((EncoderNLS)encoder).Convert(chars, charCount, bytes, byteCount,
        flush, out charsUsed, out bytesUsed, out completed);
```

That becomes a ConvertDelegate delegate type, and as a result we're getting close to the end of our solution.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;
using System.Text;

// Extension methods must live in a static class; unsafe allows the pointer types.
public static unsafe class EncoderExtensions
{
    private delegate void ConvertDelegate(Encoder encoder, char* chars, int charCount, byte* bytes,
        int byteCount, bool flush, out int charsUsed, out int bytesUsed, out bool completed);

    // Built once, the first time the class is used.
    private static readonly ConvertDelegate ConvertInternal = CreateDelegate();

    public static void Convert(this Encoder encoder, char* chars, int charCount,
        byte* bytes, int byteCount, bool flush,
        out int charsUsed, out int bytesUsed, out bool completed)
    {
        ConvertInternal(encoder, chars, charCount, bytes, byteCount, flush,
            out charsUsed, out bytesUsed, out completed);
    }

    private static ConvertDelegate CreateDelegate()
    {
        var parameterSignature = new[]
        {
            typeof(char*),
            typeof(int),
            typeof(byte*),
            typeof(int),
            typeof(bool),
            typeof(int).MakeByRefType(),
            typeof(int).MakeByRefType(),
            typeof(bool).MakeByRefType()
        };

        var method = Encoding.UTF8.GetEncoder().GetType().GetRuntimeMethod("Convert", parameterSignature);

        var encoder = Expression.Parameter(typeof(Encoder), "encoder");
        var chars = Expression.Parameter(typeof(char*), "chars");
        var charCount = Expression.Parameter(typeof(int), "charCount");
        var bytes = Expression.Parameter(typeof(byte*), "bytes");
        var byteCount = Expression.Parameter(typeof(int), "byteCount");
        var flush = Expression.Parameter(typeof(bool), "flush");
        var charsUsed = Expression.Parameter(typeof(int).MakeByRefType(), "charsUsed");
        var bytesUsed = Expression.Parameter(typeof(int).MakeByRefType(), "bytesUsed");
        var completed = Expression.Parameter(typeof(bool).MakeByRefType(), "completed");

        var methodCallParams = new[]
        {
            chars, charCount, bytes, byteCount, flush, charsUsed, bytesUsed, completed
        };

        var castEncoder = Expression.Convert(encoder, Type.GetType("System.Text.EncoderNLS"));
        var methodCall = Expression.Call(castEncoder, method, methodCallParams);

        return Expression.Lambda<ConvertDelegate>(methodCall, new[] { encoder }.Concat(methodCallParams)).Compile();
    }
}
```
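The whole technique can be exercised without touching the framework's internals by using a stand-in hierarchy. In this sketch, EncoderBase, HiddenEncoder, EncoderFactory, and Split() are all hypothetical. HiddenEncoder plays the role of EncoderNLS (imagine it internal to another assembly). Its type is obtained at runtime from an instance, just as the article does with Encoding.UTF8.GetEncoder().GetType(). Pointer parameters are left out so that the sketch doesn't depend on how a given version of System.Linq.Expressions treats pointer types.

```csharp
using System;
using System.Linq;
using System.Linq.Expressions;
using System.Reflection;

public abstract class EncoderBase { }

// Stand-in for EncoderNLS: has a method the base class doesn't declare.
internal sealed class HiddenEncoder : EncoderBase
{
    public void Split(int value, out int half, out bool wasOdd)
    {
        half = value / 2;
        wasOdd = value % 2 != 0;
    }
}

// Stand-in for Encoding.UTF8.GetEncoder(): hands out the hidden type as its base.
public static class EncoderFactory
{
    public static EncoderBase Create() => new HiddenEncoder();
}

public static class HiddenEncoderExtensions
{
    // Named delegate type, because Action<...> can't express out parameters.
    private delegate void SplitDelegate(EncoderBase target, int value, out int half, out bool wasOdd);

    private static readonly SplitDelegate SplitInternal = CreateDelegate(EncoderFactory.Create().GetType());

    public static void Split(this EncoderBase target, int value, out int half, out bool wasOdd)
        => SplitInternal(target, value, out half, out wasOdd);

    private static SplitDelegate CreateDelegate(Type hiddenType)
    {
        MethodInfo method = hiddenType.GetRuntimeMethod("Split",
            new[] { typeof(int), typeof(int).MakeByRefType(), typeof(bool).MakeByRefType() });

        ParameterExpression target = Expression.Parameter(typeof(EncoderBase), "target");
        ParameterExpression value = Expression.Parameter(typeof(int), "value");
        ParameterExpression half = Expression.Parameter(typeof(int).MakeByRefType(), "half");
        ParameterExpression wasOdd = Expression.Parameter(typeof(bool).MakeByRefType(), "wasOdd");
        ParameterExpression[] callArgs = { value, half, wasOdd };

        // ((HiddenEncoder)target).Split(value, out half, out wasOdd), with the cast
        // target supplied as a runtime Type rather than a compile-time name.
        UnaryExpression cast = Expression.Convert(target, hiddenType);
        MethodCallExpression call = Expression.Call(cast, method, callArgs);

        return Expression.Lambda<SplitDelegate>(call, new[] { target }.Concat(callArgs)).Compile();
    }
}
```

Calling Split(7, ...) through an EncoderBase reference yields a half of 3 with wasOdd set to true. The compiled delegate is built once in the static initializer, so each call afterwards costs about the same as an ordinary delegate invocation.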

Don't Do This

This is not normally something I would recommend. And, I still don't really recommend it. However, the code for .Net Core 1.x is fixed at this point, so this solution represents a relatively safe way of getting access to an internal method that we already know exists. And thankfully, this all becomes obsolete when .Net Core 2.0 is released.
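Once Encoder exposes the overload publicly, the extension method and the reflection can go away, and the buffer from earlier can call Convert() directly. The following is a minimal sketch, assuming .Net Core 2.0 or later and unsafe code enabled. The Count property, the constructor check, and the early return when fewer than 4 bytes remain are additions for the sketch. They are needed because Convert() throws an ArgumentException when it can't fit even one character, and one code point takes at most 4 bytes in UTF-8.

```csharp
using System;
using System.Text;

public sealed class WriteableBuffer
{
    private readonly Encoder _encoder = Encoding.UTF8.GetEncoder();
    private int _currentPosition;

    public WriteableBuffer(int bufferSize)
    {
        // Smaller than one worst-case UTF-8 sequence could never make progress.
        if (bufferSize < 4) throw new ArgumentOutOfRangeException(nameof(bufferSize));
        Buffer = new byte[bufferSize];
    }

    public byte[] Buffer { get; }

    // Bytes currently filled, i.e. Buffer[0..Count) is ready to send.
    public int Count => _currentPosition;

    // Writes as much of item[offset..] as fits; returns the number of chars consumed.
    public unsafe int WriteString(string item, int offset, bool flush)
    {
        int remaining = Buffer.Length - _currentPosition;
        if (remaining < 4) return 0; // caller should drain and Reset() first

        fixed (char* charPtr = item)
        fixed (byte* bytePtr = &Buffer[_currentPosition])
        {
            // The byte count is the space actually left, never int.MaxValue.
            _encoder.Convert(charPtr + offset, item.Length - offset, bytePtr, remaining, flush,
                out int charsUsed, out int bytesUsed, out bool _);
            _currentPosition += bytesUsed;
            return charsUsed;
        }
    }

    public void Reset() => _currentPosition = 0;
}
```

A caller sends Buffer[0..Count) to the socket and calls Reset() whenever WriteString() consumes fewer characters than remain, then resumes at the new offset.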

But for now, I had to do unspeakable things. I'm waiting for my call from the UN Security Council any day now.